
Revisiting Euclidean Normalization: A Second Look

3 Revisiting Normalization

3.1 Revisiting Euclidean Normalization

4 Riemannian Normalization on Lie Groups

5 LieBN on the Lie Groups of SPD Manifolds and 5.1 Deformed Lie Groups of SPD Manifolds

7 Conclusions, Acknowledgments, and References

APPENDIX CONTENTS

B Basic layers in SPDNet and TSMNet

C Statistical Results of Scaling in the LieBN

D LieBN as a Natural Generalization of Euclidean BN

E Domain-specific Momentum LieBN for EEG Classification

F Backpropagation of Matrix Functions

G Additional Details and Experiments of LieBN on SPD manifolds

H Preliminary Experiments on Rotation Matrices

I Proofs of the Lemmas and Theories in the Main Paper

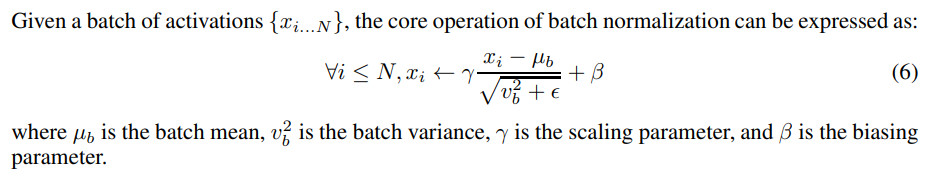

3.1 REVISITING EUCLIDEAN NORMALIZATION

In Euclidean DNNs, normalization stands as a pivotal technique for accelerating network training by mitigating the issue of internal covariate shift (Ioffe & Szegedy, 2015). While various normalization methods have been introduced (Ioffe & Szegedy, 2015; Ba et al., 2016; Ulyanov et al., 2016; Wu & He, 2018), they all share a common fundamental concept: the regulation of the first and second statistical moments. In this paper, we focus on batch normalization only.
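To make the "first and second statistical moments" concrete, here is a minimal NumPy sketch of the standard training-time batch normalization of Ioffe & Szegedy (2015). It is an illustration under stated assumptions, not the authors' code: the function name `batch_norm`, the `(N, D)` batch layout, and the `eps` default are choices made for this example, and running statistics for inference are omitted.

```python
import numpy as np

def batch_norm(x, gamma, beta, eps=1e-5):
    """Feature-wise batch normalization for a batch x of shape (N, D).

    Illustrative sketch only: names and defaults are assumptions,
    and no running averages are tracked.
    """
    mu = x.mean(axis=0)                    # first moment: per-feature batch mean
    var = x.var(axis=0)                    # second central moment: biased batch variance
    x_hat = (x - mu) / np.sqrt(var + eps)  # centering, then scaling to unit variance
    return gamma * x_hat + beta            # learnable scale (gamma) and shift (beta)

# Toy batch with mean 3 and standard deviation 2 in every feature.
rng = np.random.default_rng(0)
x = rng.normal(loc=3.0, scale=2.0, size=(64, 4))
y = batch_norm(x, gamma=np.ones(4), beta=np.zeros(4))
```

With `gamma` set to ones and `beta` to zeros, each output feature has approximately zero mean and unit variance over the batch; the three steps (centering, scaling, and the learnable scale and shift) are the operations that batch normalization applies to the batch statistics.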


:::info This paper is available on arXiv under the CC BY-NC-SA 4.0 DEED license.

:::

:::info Authors:

(1) Ziheng Chen, University of Trento;

(2) Yue Song, University of Trento (corresponding author);

(3) Yunmei Liu, University of Louisville;

(4) Nicu Sebe, University of Trento.

:::

