and the distribution of digital products.

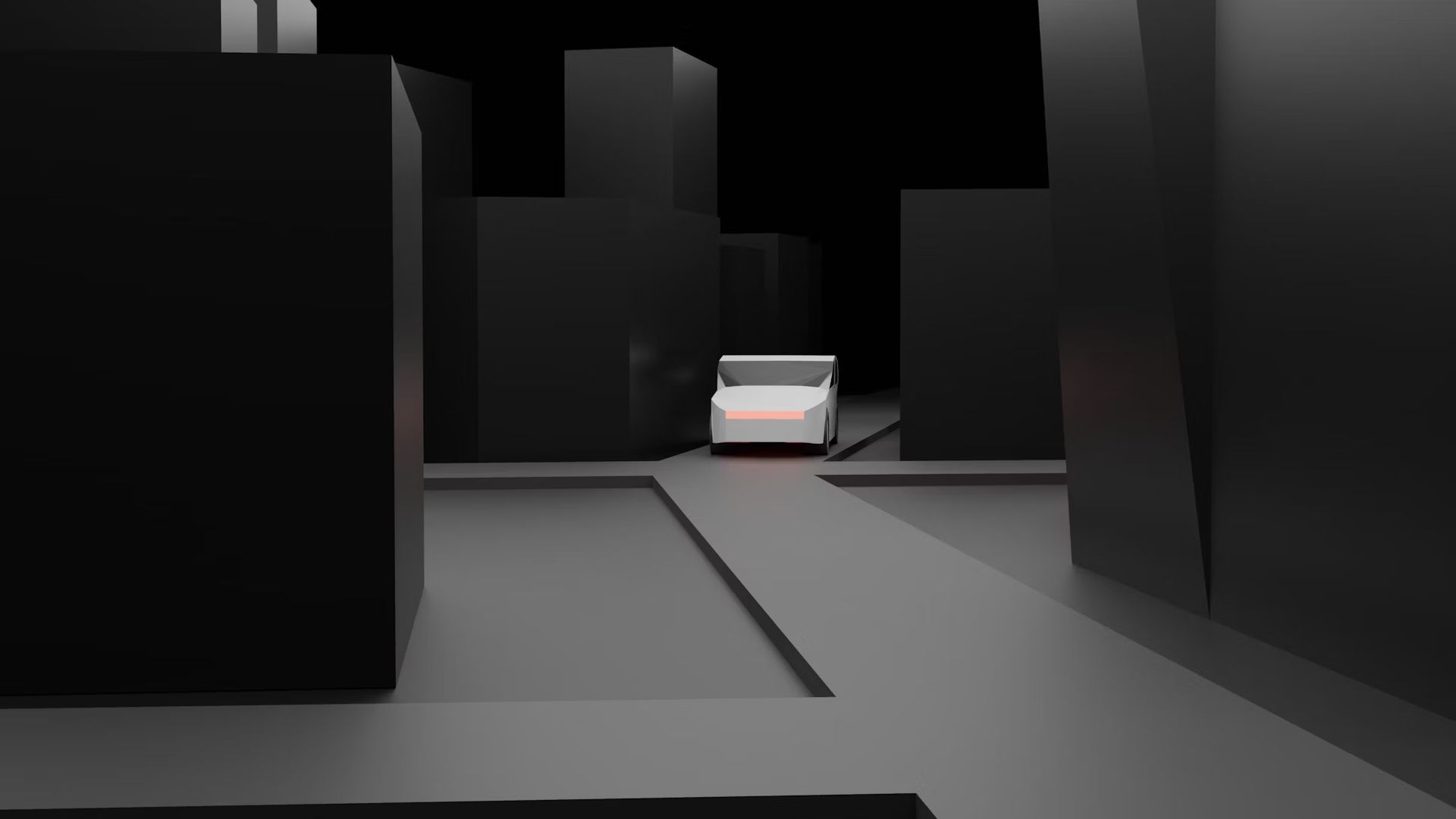

The ethical dilemmas of autonomous cars: Who’s responsible in a crash?

Imagine you’re in a car that’s driving itself. You’re sitting in the backseat, playing a game on your phone, when suddenly, the car makes a quick decision to swerve and avoid hitting a dog that ran into the street. But in doing so, it bumps into another car. Here’s the big question: Who’s responsible for that crash? Is it you, the company that made the car, or maybe the software that controls the car?

This is what people are trying to figure out as self-driving cars, also called autonomous cars, become more common. There are tons of ethical and legal questions about who’s responsible when these cars get into accidents, and the answers aren’t always easy.

How do autonomous cars make decisions?

To understand the problem, we first have to look at how autonomous cars work. These cars use artificial intelligence (AI) to drive. Think of AI as a super-smart brain that processes all kinds of information, like how fast the car is going, where other cars are, and what the traffic lights are doing. The car uses sensors, cameras, and something called LIDAR (which is like radar but with lasers) to “see” its surroundings.

But here’s the tricky part: sometimes the car has to make tough decisions. Let’s say a car is driving toward a crosswalk and suddenly two people jump into the road. The car has to decide who or what to protect – the people in the crosswalk or the passenger inside the car. This is a big ethical question, and people are still figuring out how to teach the car’s AI to make those decisions.

It’s kind of like the “trolley problem”, which is a famous ethical puzzle. In the problem, a trolley is speeding toward five people, and you have the power to switch it onto another track to save them, but doing so would hurt one person standing on that other track. There’s no right answer, and that’s exactly what makes it so complicated for autonomous cars.

Who’s at fault in a crash?

Now let’s talk about responsibility. In a normal car crash, it’s usually pretty clear who’s to blame: one of the drivers. But in an autonomous car, there might not be a driver at all, or the person in the car might not even be paying attention when the crash happens. So who’s responsible?

There are a few options:

- The Manufacturer: If the crash happened because something in the car malfunctioned, like if the brakes didn’t work, then the company that made the car could be held responsible.

- The Software Developer: If the problem was with the AI’s decision-making, the company that designed the software might be blamed. This could happen if the car made the wrong choice in an emergency situation.

- The Human Passenger: Some self-driving cars still require people to be ready to take control if something goes wrong. If a crash happens and the human wasn’t paying attention, they could be held accountable.

- Third-party Companies: Sometimes, a different company might be in charge of maintaining the cars (like a ride-sharing service that uses autonomous cars). If something wasn’t fixed properly, they could be responsible for the crash.
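
The four options above can be pictured as a simple lookup from the cause of a crash to the party most likely to be examined first. The sketch below is only an illustration of that reasoning: the `Cause` names, the `LIKELY_PARTY` table, and the `candidate_parties` function are all made up for this example, and real liability is decided by investigators and courts, not by a table.

```python
from enum import Enum


class Cause(Enum):
    """Hypothetical crash causes, one per option in the list above."""
    HARDWARE_FAILURE = "hardware failure"        # e.g. the brakes didn't work
    SOFTWARE_DECISION = "software decision"      # the AI made the wrong choice
    INATTENTIVE_HUMAN = "inattentive human"      # the person wasn't ready to take over
    POOR_MAINTENANCE = "poor maintenance"        # something wasn't fixed properly


# Assumed mapping for illustration only; not a statement of any actual law.
LIKELY_PARTY = {
    Cause.HARDWARE_FAILURE: "manufacturer",
    Cause.SOFTWARE_DECISION: "software developer",
    Cause.INATTENTIVE_HUMAN: "human passenger",
    Cause.POOR_MAINTENANCE: "third-party company",
}


def candidate_parties(causes):
    """Return the parties that might share responsibility, without duplicates."""
    parties = []
    for cause in causes:
        party = LIKELY_PARTY[cause]
        if party not in parties:
            parties.append(party)
    return parties


# A crash can have more than one cause, so more than one party can be involved.
print(candidate_parties([Cause.INATTENTIVE_HUMAN, Cause.SOFTWARE_DECISION]))
```

The point of returning a list rather than a single name is that a crash can have several contributing causes at once, which is why blame is often shared or disputed.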

A real-life example of this happened in 2018 when an Uber self-driving car hit and killed a pedestrian. In that case, it turned out the safety driver wasn’t paying attention, but the car’s AI didn’t act quickly enough either. People still debate who was really to blame.

Legal help

When you’re involved in a crash, whether it’s a regular car or an autonomous one, it can be tough. You might not know who’s to blame or how to deal with the legal system. This is where hiring a car accident attorney can help.

An experienced attorney can figure out if the crash was due to a car malfunction, a software error, or even another driver’s negligence. They’ll know how to gather evidence and deal with insurance companies, which is important when determining who should pay for damages. Plus, if the case goes to court, they’ll be able to represent you and make sure you get a fair outcome.

They can also guide you on what to do immediately after the crash: how to file a police report, how to document the scene, and when to seek a medical evaluation. This ensures you don’t miss any important steps that could affect your case down the road.

The AI’s tough decisions

Let’s go back to the idea of the car making decisions. Picture this: a self-driving car is about to hit a deer that suddenly jumps onto the road. The AI has a split second to decide: should it swerve to avoid the deer and possibly crash into another car, or should it stay on course and hit the animal?

It’s not always clear how AI should be programmed to handle these situations. In one country, people might think the car should always protect human life above all else. In another place, people might argue that the car should minimize the damage to as many people as possible, even if that puts the passengers at risk. And these decisions can change based on different cultures or even individual preferences.
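
One way to picture why different priorities lead to different choices is to score each possible maneuver by its expected harm and pick the lowest score. The sketch below is a toy model, not how any real car is programmed: the `Maneuver` class, the risk numbers, and the two weight settings are all invented for the deer example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Maneuver:
    name: str
    passenger_risk: float  # made-up 0..1 estimate of harm to people in the car
    bystander_risk: float  # made-up 0..1 estimate of harm to people in other cars
    animal_risk: float     # made-up 0..1 estimate of harm to the animal


def choose_maneuver(options, weights):
    """Return the maneuver with the lowest weighted expected harm."""
    def cost(m):
        return (weights["passenger"] * m.passenger_risk
                + weights["bystander"] * m.bystander_risk
                + weights["animal"] * m.animal_risk)
    return min(options, key=cost)


# The deer scenario: stay on course and hit the animal, or swerve toward another car.
options = [
    Maneuver("stay_on_course", passenger_risk=0.10, bystander_risk=0.00, animal_risk=0.90),
    Maneuver("swerve", passenger_risk=0.30, bystander_risk=0.30, animal_risk=0.00),
]

# Two hypothetical value systems: one puts human life far above the animal's,
# the other treats every kind of harm the same.
humans_first = {"passenger": 10.0, "bystander": 10.0, "animal": 1.0}
equal_weights = {"passenger": 1.0, "bystander": 1.0, "animal": 1.0}

print(choose_maneuver(options, humans_first).name)   # stay_on_course
print(choose_maneuver(options, equal_weights).name)  # swerve
```

The code is identical in both runs; only the weights change. That is the hard part of the debate: someone has to choose those numbers, and people in different places would choose them differently.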

Right now, companies building these cars are trying to create ethical guidelines to help the AI make these kinds of decisions. But it’s super complicated, because no one really agrees on the “right” answer to these problems.

Can we trust autonomous cars?

A big part of the debate about self-driving cars is trust. People want to know if they can rely on these cars to make the right decisions in emergencies. And trust is hard to earn, especially when crashes still happen.

One way companies can build trust is by being transparent. That means they need to be open about how their cars work and what kinds of decisions the AI is programmed to make. When people understand what’s going on under the hood, they’re more likely to feel safe.

But it’s also scary to think about letting a machine make life-and-death decisions for us. Even if autonomous cars turn out to be safer than human drivers, who get distracted and make mistakes, it’s still tough to give up control entirely.

Companies like Tesla and Waymo claim their technology is safer than human drivers, but there’s still a lot of skepticism. A 2022 survey found that only 9% of Americans trust self-driving cars, while 68% are afraid of them.

How are governments and companies handling it?

Governments around the world are trying to come up with rules to help guide autonomous cars. In the U.S., the Department of Transportation has released guidelines for self-driving vehicles, but the laws are still catching up to the technology.

Some people suggest that car manufacturers should have to pay into an insurance fund that covers accidents caused by autonomous vehicles. Others think we need stronger regulations on how companies test their AI systems before putting them on the road.

On the company side, the responsibility falls on both the carmakers and the software developers. They need to work together to make sure that the AI is safe and that the cars can handle all sorts of real-world situations.

In summary

At the end of the day, the question of who’s responsible when an autonomous car crashes is still a big puzzle. It’s clear that there’s no one-size-fits-all answer, and the way we handle these situations will depend on what kind of legal and ethical systems we set up.

As autonomous cars continue to grow in number, we’ll need to figure out how to balance safety, trust, and accountability. Whether it’s the carmaker, the software developer, or even the human passenger, everyone involved has a role to play in making sure these vehicles are safe for everyone on the road.

It’s a big task, but if we get it right, self-driving cars could be a huge step forward in making our roads safer.

Featured image credit: Shubham Dhage/Unsplash

All Rights Reserved. Copyright , Central Coast Communications, Inc.